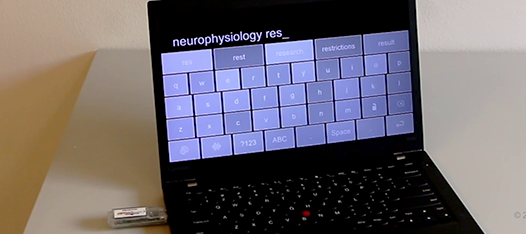

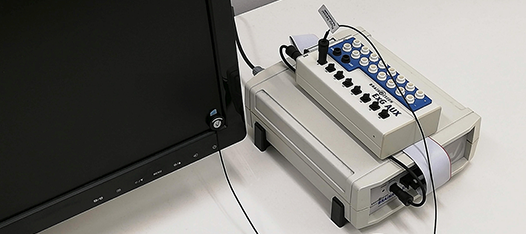

Hyperscanning Setup with 10 X.ons Using LSL and an Auditory Oddball Paradigm

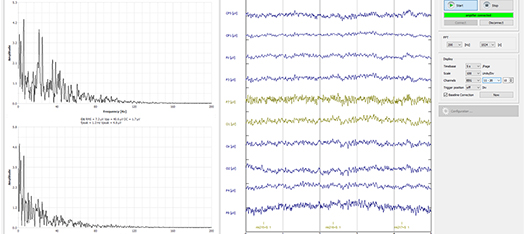

Since X.on was released, two common questions have been whether multiple X.ons can be used together and how many can be used simultaneously. To provide an informed answer, we conducted in-house tests and ran EEG paradigms with real participants using multiple X.ons.